This article describes the way I designed AVX/SSE support in my homebrew OS.

AVX registers

In long mode, there are 16 XMM registers, each 128 bits wide. With AVX, these registers are extended to 256 bits and named YMM. The YMM registers are not new registers; they are extensions of the XMM registers. YMM0 is to XMM0 what AX is to AL: XMM0 is the lower 128 bits of the YMM0 register.

The XCR0 register selects which processor states the XSAVE and XRSTOR instructions are allowed to save and restore. Bits in XCR0 are set with the XSETBV instruction. Each bit represents a feature set:

- 0b001: FPU feature set. Will save/restore content of FPU registers

- 0b010: XMM feature set. Will save/restore all XMM registers (128bit)

- 0b100: YMM feature set. Will save/restore upper half of YMM registers

Since the YMM registers are 256 bits wide and the XMM registers alias their lower 128 bits, both bit 1 and bit 2 must be enabled in order to save the entire content of the YMM registers.

Enabling AVX support

- Monitor the coprocessor, so that WAIT/FWAIT also raise #NM when CR0.TS is set (x87 and media instructions raise #NM on CR0.TS regardless): CR0.MP (bit 1) = 1

- Disable coprocessor emulation: CR0.EM (bit 2) = 0

- Enable SSE instructions and FXSAVE/FXRSTOR handling of the SSE state: CR4.OSFXSR (bit 9) = 1

- Enable #XF instead of #UD when a SIMD exception occurs: CR4.OSXMMEXCPT (bit 10) = 1

- Enable XSAVE, XRSTOR, XGETBV and XSETBV: CR4.OSXSAVE (bit 18) = 1

- Enable FPU, SSE, and AVX processor states: XCR0 = 0b111

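mov %cr0,%rax

or $0b10,%rax            # set CR0.MP (bit 1)

and $~0b100,%rax         # clear CR0.EM (bit 2)

mov %rax,%cr0

mov %cr4,%rax

or $0x40600,%rax         # OSFXSR (bit 9), OSXMMEXCPT (bit 10), OSXSAVE (bit 18)

mov %rax,%cr4

mov $0,%edx              # upper 32 bits of the new XCR0 value

mov $0b111,%eax          # FPU, SSE and AVX states

mov $0,%ecx              # ECX = 0 selects XCR0

xsetbv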
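To make the bit arithmetic of the listing easier to check, here is a minimal C sketch that computes the same register values as plain integers. It does not touch any control register; the macro and function names are hypothetical and only exist for this illustration.

```c
#include <assert.h>
#include <stdint.h>

/* Bit positions taken from the list above. */
#define CR0_MP          (1ULL << 1)
#define CR0_EM          (1ULL << 2)
#define CR4_OSFXSR      (1ULL << 9)
#define CR4_OSXMMEXCPT  (1ULL << 10)
#define CR4_OSXSAVE     (1ULL << 18)
#define XCR0_X87        (1ULL << 0)
#define XCR0_SSE        (1ULL << 1)
#define XCR0_AVX        (1ULL << 2)

/* The three CR4 bits together give the immediate used in the assembly. */
static_assert((CR4_OSFXSR | CR4_OSXMMEXCPT | CR4_OSXSAVE) == 0x40600,
              "CR4 mask should match the 0x40600 immediate");

/* New CR0 value: set MP, clear EM, leave every other bit untouched. */
uint64_t cr0_for_avx(uint64_t cr0)
{
    return (cr0 | CR0_MP) & ~CR0_EM;
}

/* New CR4 value: set OSFXSR, OSXMMEXCPT and OSXSAVE. */
uint64_t cr4_for_avx(uint64_t cr4)
{
    return cr4 | CR4_OSFXSR | CR4_OSXMMEXCPT | CR4_OSXSAVE;
}

/* Value loaded into EDX:EAX before XSETBV (with ECX = 0). */
uint64_t xcr0_for_avx(void)
{
    return XCR0_X87 | XCR0_SSE | XCR0_AVX;
}
```

Note that the mask used to clear CR0.EM must have bit 2 cleared and bit 1 set, otherwise the MP bit that was just set would be cleared again.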

Context Switching

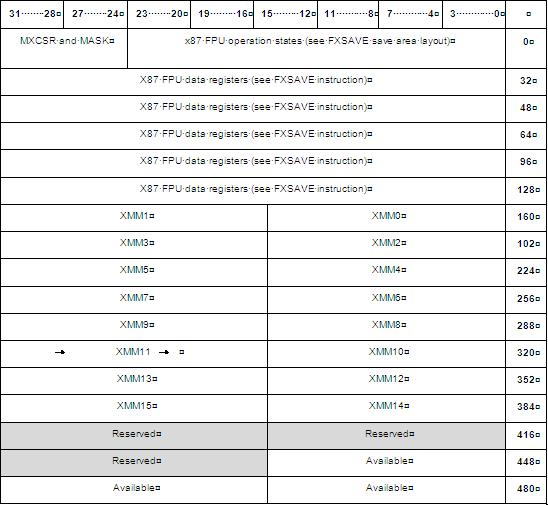

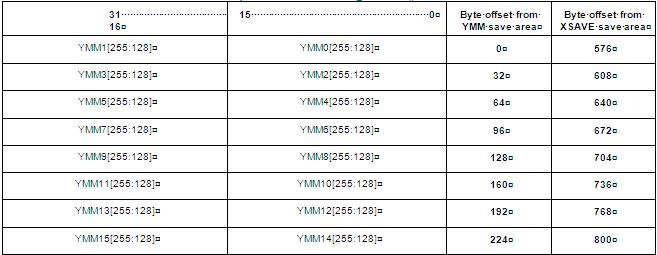

On a context switch, the state of all 16 YMM registers must be saved to avoid data corruption between threads. Saving and restoring sixteen 256-bit registers can add a lot of overhead to a context switch (one could even wonder whether implementing a fast_memcpy() is worth it given that overhead). Saving and restoring are done with the XSAVE and XRSTOR instructions. Each instruction takes a memory operand that specifies the save area to which the registers are dumped or from which they are restored. These instructions also look at the content of EDX:EAX to know which processor states to handle: EDX:EAX is bitwise ANDed with XCR0 to determine which processor states are saved or restored. In my case, I use EDX:EAX = 0b110 to save the XMM and YMM states but not the FPU state. Remember, with 0b100 alone only the upper half of each YMM register is saved/restored. To get the lower half, bit 1 must also be set to enable XMM state saving.
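The masking rule can be illustrated with a small C sketch. It only models the arithmetic (EDX:EAX ANDed with XCR0) and does not execute XSAVE; the struct and function names are hypothetical.

```c
#include <assert.h>
#include <stdbool.h>
#include <stdint.h>

#define XCR0_X87 (1ULL << 0)
#define XCR0_SSE (1ULL << 1)   /* XMM registers (lower half of YMM) */
#define XCR0_AVX (1ULL << 2)   /* upper half of the YMM registers   */

/* The two register halves that XSAVE/XRSTOR read as the requested mask. */
struct xsave_request {
    uint32_t eax;
    uint32_t edx;
};

/* Split a 64-bit feature mask into the EDX:EAX pair. */
struct xsave_request make_xsave_request(uint64_t features)
{
    struct xsave_request req = {
        .eax = (uint32_t)(features & 0xFFFFFFFFu),
        .edx = (uint32_t)(features >> 32),
    };
    return req;
}

/* States actually processed: the requested mask ANDed with XCR0. */
uint64_t effective_states(struct xsave_request req, uint64_t xcr0)
{
    uint64_t requested = ((uint64_t)req.edx << 32) | req.eax;
    return requested & xcr0;
}

/* A whole YMM register is covered only if both halves are selected. */
bool covers_full_ymm(uint64_t states)
{
    return (states & (XCR0_SSE | XCR0_AVX)) == (XCR0_SSE | XCR0_AVX);
}
```

With XCR0 = 0b111, a request of 0b110 covers the full YMM registers, while a request of 0b100 only covers their upper halves. If XCR0 had been left at 0b011, the same 0b110 request would silently shrink to 0b010.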

Optimizing context switching - lazy switching

Since media instructions are not used extensively by all threads, it is possible that one thread does not use any media instructions during a time slice (or even during its whole lifetime). In such a case, saving/restoring the whole AVX state would add a lot of overhead to the context switch for absolutely nothing.

There is a workaround for this. In my OS, every time there is a task switch, I explicitly set the TS bit in register CR0. Whenever a media instruction is executed while CR0.TS is set, a #NM (Device Not Available) exception is raised. My OS then handles that exception to save/restore the AVX context. If a task does not use media instructions during a time slice, no #NM is triggered, so there is no AVX context switch. The logic is simple.

- Assume that there is a global kernel variable called LastTaskThatRestoredAVX.

- On task switch, set CR0.TS=1

- A media instruction is executed, so #NM is generated

- On #NM:

  - clear CR0.TS

  - if LastTaskThatRestoredAVX==current task, return from exception (still the same context!)

  - XSAVE into LastTaskThatRestoredAVX's save area

  - XRSTOR from current task's save area

  - LastTaskThatRestoredAVX = current task

- Next media instruction to be executed will not trigger #NM, because we cleared CR0.TS
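The steps above can be sketched as a small user-space simulation in C. This is not the actual kernel code: CR0.TS is modelled as a boolean, XSAVE and XRSTOR are replaced by memcpy() over a fake register file, and all type and function names are hypothetical. The NULL check for the very first #NM (when no task owns the AVX state yet) is an assumption that the list does not spell out.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>
#include <string.h>

#define AVX_STATE_BYTES (16 * 32)   /* 16 YMM registers of 32 bytes each */

struct task {
    unsigned char avx_area[AVX_STATE_BYTES];   /* stand-in for the save area */
};

struct cpu {
    bool cr0_ts;                               /* models CR0.TS */
    unsigned char ymm[AVX_STATE_BYTES];        /* models the live registers */
    struct task *current;
    struct task *last_avx_owner;               /* LastTaskThatRestoredAVX */
};

/* On task switch: only set TS, do not touch the AVX state. */
void on_task_switch(struct cpu *cpu, struct task *next)
{
    cpu->current = next;
    cpu->cr0_ts = true;
}

/* #NM handler. Returns true if an AVX context switch was performed. */
bool on_device_not_available(struct cpu *cpu)
{
    assert(cpu->current != NULL);
    cpu->cr0_ts = false;                       /* clear CR0.TS */

    if (cpu->last_avx_owner == cpu->current)
        return false;                          /* registers still belong to us */

    if (cpu->last_avx_owner != NULL)           /* assumption: nothing to save the first time */
        memcpy(cpu->last_avx_owner->avx_area, cpu->ymm, AVX_STATE_BYTES);  /* "XSAVE" */

    memcpy(cpu->ymm, cpu->current->avx_area, AVX_STATE_BYTES);             /* "XRSTOR" */
    cpu->last_avx_owner = cpu->current;
    return true;
}
```

In this model, a task that is scheduled and never executes a media instruction never reaches on_device_not_available(), so its time slice costs no AVX save or restore at all.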

Save area

The memory layout of the saved registers will look like this (notice how the highest 128 bits of the YMM registers are saved separately from the XMM registers):
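As a rough C rendering of that layout, the sketch below assumes the standard (non-compacted) XSAVE format with only the x87, SSE and AVX components present. The struct and field names are hypothetical, and a real kernel would normally confirm the offset and size of the AVX component with CPUID leaf 0xD rather than hard-coding them. The 64-byte alignment reflects the alignment XSAVE requires for its memory operand.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>
#include <string.h>

struct xsave_area {
    /* Legacy region (512 bytes), same layout as FXSAVE. */
    _Alignas(64) uint8_t x87_state[160];  /* control words, MXCSR, ST0-ST7 */
    uint8_t xmm[16][16];                  /* XMM0-XMM15: low 128 bits of YMM */
    uint8_t legacy_reserved[96];

    /* XSAVE header (64 bytes). */
    uint64_t xstate_bv;                   /* which components hold valid data */
    uint64_t xcomp_bv;
    uint8_t header_reserved[48];

    /* AVX component: high 128 bits of YMM0-YMM15. */
    uint8_t ymm_high[16][16];
};

static_assert(offsetof(struct xsave_area, xmm) == 160, "XMM block offset");
static_assert(offsetof(struct xsave_area, xstate_bv) == 512, "header offset");
static_assert(offsetof(struct xsave_area, ymm_high) == 576, "AVX block offset");
static_assert(sizeof(struct xsave_area) == 832, "total size");

/* Reassemble one 256-bit YMM register from its two separately saved halves. */
void read_ymm(const struct xsave_area *area, unsigned index, uint8_t out[32])
{
    assert(index < 16);
    memcpy(out, area->xmm[index], 16);            /* low half from the legacy region */
    memcpy(out + 16, area->ymm_high[index], 16);  /* high half from the AVX component */
}
```

The read_ymm() helper shows the practical consequence of the split: reading back a full YMM register from a save area means stitching together two 16-byte pieces that are 416 bytes apart.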